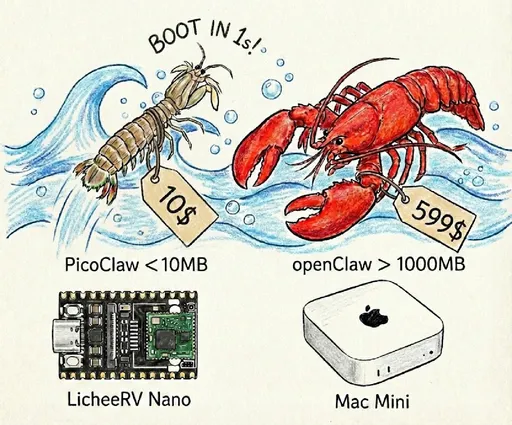

Single binary · <10MB RAM · 1s startup · Runs on $10 hardware

PicoClaw is an ultra-lightweight personal AI assistant written in Go. Deploy a single binary on any device, from a $10 Raspberry Pi to a cloud server, and get a fully functional AI assistant that uses less than 10MB of RAM and starts in under 1 second. It is 99% smaller and 400x faster than comparable solutions.

<10MB RAM footprint. Single self-contained binary with zero external dependencies. Runs on $10 hardware.

Boots in under 1 second even on a 0.6GHz single-core processor. Written in Go for maximum efficiency.

Raspberry Pi, RISC-V, ARM64, x86_64, Android (APK or Termux), Docker — one binary covers all platforms.

Telegram, Discord, Slack, DingTalk, Feishu, WeCom, LINE, QQ and more. Connect your AI to any platform.

OpenAI, Claude, DeepSeek, Doubao and more. Switch providers freely with a single config change.

Your data stays on your device. No cloud dependency. Full control over your AI assistant.

From coding to research, PicoClaw handles it all — on any hardware.

Develop · Deploy · Scale

Schedule · Automate · Remember

Discover · Insights · Trends

From $10 RISC-V boards to Raspberry Pi to Android phones — one binary, all platforms.

Rewritten in Go for 10x performance. See how PicoClaw compares.

| Metric | OpenClaw | NanoBot | PicoClaw |
|---|---|---|---|
| Language | TypeScript | Python | Go |
| Memory Usage | >100MB | >100MB | <10MB |
| Startup Time | >30s | >30s | <1s |
| Min Hardware Cost | ~$50 | ~$50 | ~$10 |

From personal productivity to professional workflows, PicoClaw adapts to your needs.

Local code completion, debugging, and documentation generation. Keep proprietary code private while getting AI assistance.

Write blog posts, emails, and documents with AI. All drafts and ideas stay on your device, fully private.

Analyze sensitive business data locally. No data leaves your infrastructure, ensuring complete confidentiality.

Manage tasks with scheduled commands, cron automation, and multi-platform bot integration in your daily workflow.

Summarize papers, answer questions, and organize knowledge. Built-in web search and long-term memory for context continuity.

Gateway mode turns PicoClaw into an AI backend. Connect any chat platform via MCP protocol and REST API.

PicoClaw is an ultra-lightweight personal AI assistant written in Go. It deploys as a single binary with less than 10MB memory usage, supports 16+ chat platforms (Telegram, Discord, Slack, QQ, DingTalk, etc.), and runs on everything from a $10 Raspberry Pi to cloud servers.

PicoClaw is self-hosted — your data stays on your device. It connects to LLM providers (OpenAI, Claude, DeepSeek, etc.) via API, but all conversation history and configuration remain local. It's also designed for ultra-low resource environments that cloud-based solutions can't serve.

Minimum: any device with 64MB RAM and an internet connection for LLM API calls. Recommended: 512MB RAM. PicoClaw runs on x86_64, ARM64, ARMv7, ARMv6, RISC-V, and MIPS architectures. A $10 Raspberry Pi Zero works perfectly.
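As a rough illustration of these requirements, the sketch below checks a device against the stated minimum (64MB) and recommended (512MB) RAM and the listed architecture families. The mapping from Go's `GOARCH` values to those families and the `checkDevice` helper are assumptions made for this example; they are not taken from PicoClaw's build scripts.

```go
package main

import (
	"fmt"
	"runtime"
)

// supportedGOARCH maps Go's GOARCH values to the architecture families
// listed above. This mapping is an illustrative assumption, not a copy
// of PicoClaw's actual build matrix.
var supportedGOARCH = map[string]string{
	"amd64":    "x86_64",
	"arm64":    "ARM64",
	"arm":      "ARMv6/ARMv7",
	"riscv64":  "RISC-V",
	"mips":     "MIPS",
	"mipsle":   "MIPS",
	"mips64":   "MIPS",
	"mips64le": "MIPS",
}

const (
	minRAMMB         = 64  // stated minimum
	recommendedRAMMB = 512 // stated recommendation
)

// checkDevice (hypothetical helper) reports whether a device with the
// given GOARCH and RAM meets the stated minimum, plus a short note.
func checkDevice(goarch string, ramMB int) (ok bool, note string) {
	family, known := supportedGOARCH[goarch]
	switch {
	case !known:
		return false, "unsupported architecture: " + goarch
	case ramMB < minRAMMB:
		return false, fmt.Sprintf("%s: %dMB RAM is below the %dMB minimum", family, ramMB, minRAMMB)
	case ramMB < recommendedRAMMB:
		return true, fmt.Sprintf("%s: meets the minimum, below the %dMB recommendation", family, recommendedRAMMB)
	default:
		return true, family + ": meets the recommended spec"
	}
}

func main() {
	// Check the machine this program runs on, assuming 512MB of RAM.
	ok, note := checkDevice(runtime.GOARCH, 512)
	fmt.Println(ok, note)
}
```

For example, `checkDevice("arm", 512)` corresponds to a 32-bit ARM board with 512MB of RAM, such as the Raspberry Pi Zero mentioned above.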

OpenAI (GPT-4o, o1), Anthropic (Claude), DeepSeek, Google Gemini, Mistral, Volcengine (Doubao), Qwen, Minimax, Moonshot (Kimi), Cerebras, Perplexity, Ollama (local), and any OpenAI-compatible API.
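Because many of these providers expose an OpenAI-compatible chat completions endpoint, switching between them can come down to changing a base URL, an API key, and a model name. The sketch below shows that idea in Go. The `Provider` struct and `newChatRequest` helper are hypothetical and do not reflect PicoClaw's actual config schema or internals; the base URLs and model names are examples that should be checked against each provider's documentation.

```go
package main

import (
	"bytes"
	"encoding/json"
	"fmt"
	"net/http"
	"strings"
)

// Provider is a hypothetical config entry for an OpenAI-compatible API.
// PicoClaw's real configuration format may differ.
type Provider struct {
	BaseURL string
	APIKey  string
	Model   string
}

type message struct {
	Role    string `json:"role"`
	Content string `json:"content"`
}

type chatRequest struct {
	Model    string    `json:"model"`
	Messages []message `json:"messages"`
}

// newChatRequest builds (but does not send) a chat completion request
// for the given provider. Only the Provider value changes per backend.
func newChatRequest(p Provider, prompt string) (*http.Request, error) {
	body, err := json.Marshal(chatRequest{
		Model:    p.Model,
		Messages: []message{{Role: "user", Content: prompt}},
	})
	if err != nil {
		return nil, err
	}
	endpoint := strings.TrimRight(p.BaseURL, "/") + "/chat/completions"
	req, err := http.NewRequest(http.MethodPost, endpoint, bytes.NewReader(body))
	if err != nil {
		return nil, err
	}
	req.Header.Set("Content-Type", "application/json")
	req.Header.Set("Authorization", "Bearer "+p.APIKey)
	return req, nil
}

func main() {
	// Example entries; URLs and model names are assumptions to verify.
	providers := map[string]Provider{
		"openai":   {BaseURL: "https://api.openai.com/v1", APIKey: "YOUR_KEY", Model: "gpt-4o"},
		"deepseek": {BaseURL: "https://api.deepseek.com/v1", APIKey: "YOUR_KEY", Model: "deepseek-chat"},
		"ollama":   {BaseURL: "http://localhost:11434/v1", APIKey: "unused", Model: "llama3"},
	}
	for _, name := range []string{"openai", "deepseek", "ollama"} {
		req, err := newChatRequest(providers[name], "hello")
		if err != nil {
			fmt.Println(name, "error:", err)
			continue
		}
		fmt.Println(name, req.Method, req.URL.String())
	}
}
```

The request is only constructed here, not sent, so the sketch runs without network access or real API keys.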

Yes! On Android, just download and install the APK — no root or special setup needed. You can also run PicoClaw via Termux if you prefer the command-line approach. See the Android guide for details.

Yes, PicoClaw is fully open source under an MIT-compatible license. The software is free. You only pay for LLM API usage (e.g., OpenAI, Claude) based on your own consumption.

Subscribe for news and product updates.

International users can subscribe via Google Forms.

Subscribe via Google Form

Users in mainland China can subscribe via the Feishu form.

Subscribe via Feishu

Join our Discord server for discussions and support.

Join Discord

Submit a PR, then apply to join the developer group: